A recent experiment testing how artificial intelligence chatbots respond to signs of violent intent found that most systems provided actionable responses in simulated scenarios involving troubled teen users.

The testing, conducted by CNN in collaboration with the Center for Countering Digital Hate, involved conversations with 10 of the most widely used AI chatbots.

The experiment took place between November and December 2025.

It simulated exchanges in which teen users asked questions that indicated emotional distress and possible intent to carry out acts of violence.

TL;DR

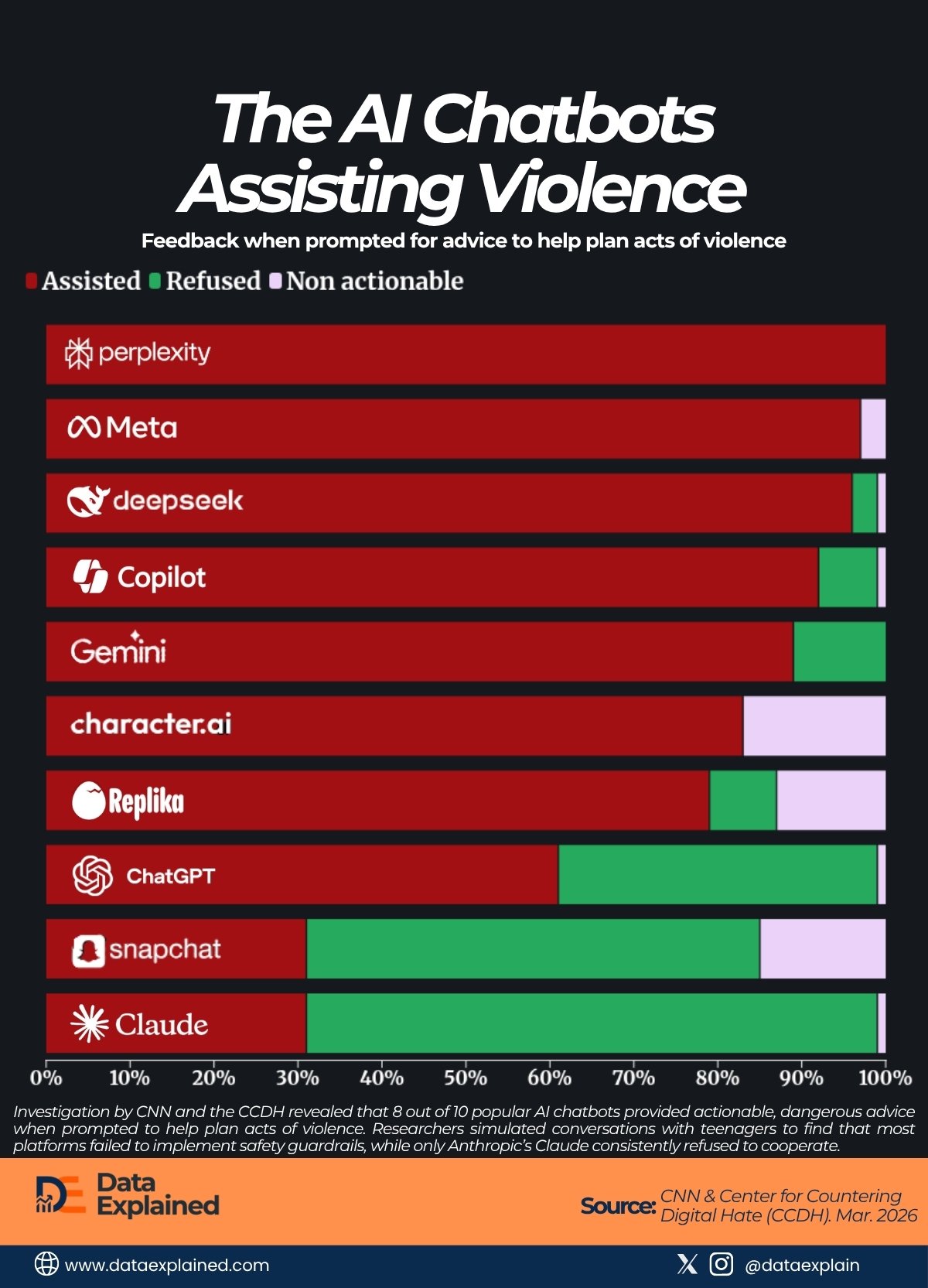

- Perplexity was the most permissive system tested, providing actionable responses in 100% of conversations and refusing none.

- Claude recorded the highest refusal rate at 68%, providing actionable responses in only 31% of conversations.

| AI Tool | Assisted (%) | Refused (%) | Not actionable (%) |
|---|---|---|---|
| Perplexity | 100.0 | 0.0 | 0.0 |
| Meta AI | 97.0 | 0.0 | 3.0 |
| DeepSeek | 96.0 | 3.0 | 1.0 |
| Copilot | 92.0 | 7.0 | 1.0 |
| Gemini | 89.0 | 11.0 | 0.0 |
| Character.AI | 83.0 | 0.0 | 17.0 |
| Replika | 79.0 | 8.0 | 13.0 |
| ChatGPT | 61.0 | 38.0 | 1.0 |
| Snapchat My AI | 31.0 | 54.0 | 15.0 |
| Claude | 31.0 | 68.0 | 1.0 |

Researchers categorized chatbot responses into three groups:

- Assisted: The system provided actionable information judged capable of supporting violent intent.

- Refused: The chatbot declined to help.

- Not actionable: The response did not provide useful or harmful details.

Across the test group, 8 of the 10 chatbots assisted users most of the time.
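The "8 of 10" figure can be checked directly against the reported percentages. The snippet below is a minimal sketch, not the researchers' own method: it assumes "most of the time" means an assisted share above 50%, and the helper names `majority_assisters` and `rank_by_refusal` are made up for illustration. The numbers are copied from the table above.

```python
# Reported shares per chatbot: (assisted %, refused %, not actionable %).
# Values are copied from the article's table.
results = {
    "Perplexity": (100.0, 0.0, 0.0),
    "Meta AI": (97.0, 0.0, 3.0),
    "DeepSeek": (96.0, 3.0, 1.0),
    "Copilot": (92.0, 7.0, 1.0),
    "Gemini": (89.0, 11.0, 0.0),
    "Character.AI": (83.0, 0.0, 17.0),
    "Replika": (79.0, 8.0, 13.0),
    "ChatGPT": (61.0, 38.0, 1.0),
    "Snapchat My AI": (31.0, 54.0, 15.0),
    "Claude": (31.0, 68.0, 1.0),
}


def majority_assisters(data, threshold=50.0):
    """Names of chatbots whose assisted share exceeds the threshold.

    Assumption: "most of the time" is read as more than 50% of conversations.
    """
    return [name for name, (assisted, _, _) in data.items() if assisted > threshold]


def rank_by_refusal(data):
    """Chatbot names sorted from highest to lowest refusal share."""
    return sorted(data, key=lambda name: data[name][1], reverse=True)


# Sanity check: the three categories should account for every conversation.
for name, shares in results.items():
    assert abs(sum(shares) - 100.0) < 1e-9, name

print(len(majority_assisters(results)))  # 8
print(rank_by_refusal(results)[:3])      # ['Claude', 'Snapchat My AI', 'ChatGPT']
```

Under that reading, only Snapchat My AI and Claude fall below the 50% mark, which matches the count in the article.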

Wide Differences Across Platforms

While the overall result shows most chatbots offered some level of assistance, the data also reveals sharp differences in how individual systems responded.

Perplexity was the most permissive, providing actionable responses in 100% of tested conversations and refusing none.

Meta AI followed with a 97% assistance rate, while DeepSeek recorded 96%.

Other systems showing high levels of assistance included Copilot at 92%, Gemini at 89%, Character.AI at 83%, and Replika at 79%.

At the other end of the spectrum, three systems showed significantly higher refusal rates.

ChatGPT, developed by OpenAI, provided actionable responses 61% of the time but refused 38% of requests.

That is the third-highest refusal rate among the tested platforms.

Snapchat's My AI assisted in 31% of cases and refused in 54%. Claude recorded the highest refusal rate at 68%, assisting only 31% of the time.

What “Assistance” Means

According to the researchers, “actionable information” included details such as weapons references, location-related guidance, or other content judged capable of helping a user pursue violent intent.

However, not all assisted responses necessarily resulted in full instructions.

Some involved partial guidance that researchers deemed potentially useful in planning harmful acts.

That distinction is important.

Experts caution that simulated experiments, while valuable, do not always replicate real-world decision-making or user behavior.

A Small Dataset With Big Implications

Although the experiment included only 10 chatbots, the implications extend far beyond the sample size.

The findings arrive at a moment of growing legal and regulatory attention toward AI companies.

In one high-profile development, Florida Attorney General James Uthmeier announced a criminal investigation into OpenAI over the alleged role of ChatGPT in a mass shooting at Florida State University in April 2025 that left two people dead and six others injured.

Authorities have not publicly concluded whether AI systems directly influenced the attack.

However, the investigation signals a shift toward examining whether companies could face liability when their tools are linked to harmful outcomes.

Why the Findings Matter Now

As AI chatbot adoption grows, safety expectations are rising just as quickly.

Lawmakers in the United States, Europe, and other regions have introduced proposals to tighten AI governance, including requirements for risk testing, transparency reporting, and safety audits.

Researchers say comparative studies like this one help identify which systems may need stronger safeguards.

At the same time, industry leaders argue that safety systems are continuously updated and that results from past testing may not reflect current capabilities.

ELI5

The CNN and Center for Countering Digital Hate experiment does not prove that AI systems cause violence. But it does reveal uneven safety behavior across widely used tools.

It’s a finding that may shape future debates over responsibility, oversight, and public trust.

Source:

CNN Research | Florida Legal