As the Pentagon threatens to sever its contract with Anthropic and declare the AI company a “supply chain risk,” market data reveals why the clash has become so heated.

Two-thirds of all military artificial intelligence spending goes directly to combat platforms designed to engage and destroy targets.

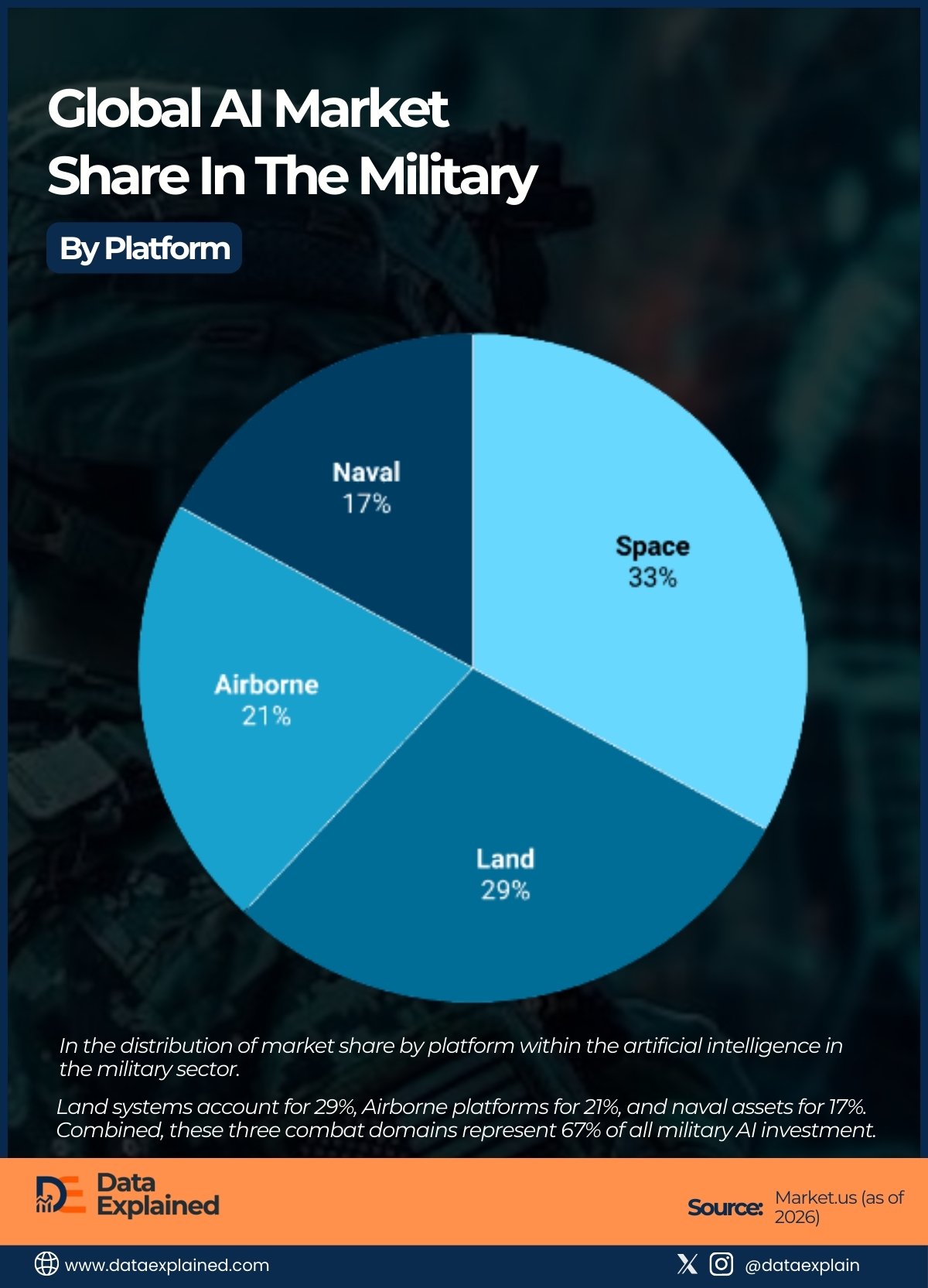

The graphic above shows global military AI market share by platform.

The data comes from Market.us Scoop.

As you can see, land systems account for 29%, airborne platforms for 21%, and naval assets for 17%. Combined, these three combat domains represent 67% of all military AI investment.

TL;DR

- 67% of military AI spending targets combat platforms, validating Anthropic’s concerns about autonomous weapons and making restrictions incompatible with the Pentagon’s strategy.

- 54% of military AI operates on platforms without human presence (Space and Airborne), creating ideal conditions for fully autonomous systems to select and engage targets independently.
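For readers who want to check the arithmetic behind those two headline figures, here is a minimal Python sketch. It uses only the four platform shares quoted in this article (Space 33%, land 29%, airborne 21%, naval 17%). The dictionary and the `combined_share` helper are illustrative names, not part of the Market.us Scoop dataset.

```python
# Market share by platform, in percent, as quoted in this article.
# The variable and function names are illustrative assumptions.
shares = {"Space": 33, "Land": 29, "Airborne": 21, "Naval": 17}


def combined_share(platforms):
    """Sum the percentage shares for the named platforms."""
    return sum(shares[name] for name in platforms)


# The three domains the article groups as combat platforms.
combat = combined_share(["Land", "Airborne", "Naval"])

# The two domains the article groups as operating without people on board.
crewless = combined_share(["Space", "Airborne"])

# Sanity check: the four slices should cover the whole chart.
total = sum(shares.values())

print(f"Land + Airborne + Naval: {combat}%")  # 67%
print(f"Space + Airborne: {crewless}%")       # 54%
print(f"All platforms: {total}%")             # 100%
```

Airborne is counted in both groupings, so the 67% and 54% figures overlap and should not be added together.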

The numbers explain the standoff

Defense Secretary Pete Hegseth wants unrestricted access to AI models like Anthropic’s Claude across all military applications.

Anthropic refuses. The company is particularly concerned about Claude being used for mass domestic surveillance or to develop fully autonomous weapons (systems that can select and engage targets without human oversight).

Today’s chart shows Anthropic’s fears aren’t abstract. They’re quantifiable.

Land-based military AI (the 29% slice) includes autonomous ground vehicles, border surveillance systems, and monitoring networks that can easily pivot from battlefield applications to domestic security.

This is exactly where “mass domestic surveillance” becomes a tangible risk rather than a hypothetical concern.

Airborne platforms, at 21%, encompass drones and unmanned aircraft increasingly capable of independent operation.

These are the systems most likely to become fully autonomous weapons if AI restrictions are lifted.

Naval systems, though only 17%, include autonomous submarines and surface vessels that operate in environments where communication delays make human-in-the-loop decision-making difficult.

Together, these combat platforms account for 67 cents of every dollar spent on military AI globally.

Space dominates, but differently

The chart’s most striking finding may be that Space commands 33% of military AI spending (more than any other single domain).

Yet space applications rarely dominate public discussions about military AI ethics.

This category includes satellite systems, missile defense, early warning networks, and anti-satellite weapons.

While strategically crucial in the race against China, space-based AI is generally less controversial because it doesn’t directly target people on the ground.

Still, even space systems raise concerns.

Surveillance satellites powered by unrestricted AI could enable the kind of mass monitoring Anthropic fears, tracking individuals globally without oversight or accountability.

An autonomous threshold

Space and airborne platforms together make up more than half of the military AI market (54%).

These share a crucial characteristic: humans aren’t physically present on the platform.

When AI operates a satellite or drone, there’s no pilot or crew making real-time decisions. This creates conditions ideal for fully autonomous operation, where AI systems identify threats, select responses, and execute actions without meaningful human control.

This is Anthropic’s nightmare scenario, and the data shows it’s not a small corner of the military AI market. It’s the majority.

Why the Pentagon won’t budge

The relatively even distribution across domains, with shares ranging from 17% to 33%, reveals the Pentagon’s strategic problem.

There’s no dominant platform. Military superiority requires AI deployed everywhere simultaneously.

Restrictions on any single domain create gaps that adversaries can exploit.

- If Claude won’t work on land systems, competitors might field superior autonomous ground forces.

- If it can’t power drones, air superiority erodes.

- If naval applications are off-limits, undersea warfare suffers.

From the Pentagon’s perspective, Anthropic’s restrictions don’t just limit one application. They undermine the entire strategy to integrate AI across all warfare domains faster and more effectively than China.

The threat to declare Anthropic a “supply chain risk” reflects this calculation.

A company that won’t provide unrestricted access is, by definition, an unreliable partner in a technological arms race where every domain matters.

The precedent problem

Three other leading AI labs (reportedly OpenAI, Google DeepMind, and xAI, the maker of Grok) are watching closely.

They’re negotiating their own Pentagon contracts and deliberating internally about acceptable terms.

If Anthropic holds firm and loses its Pentagon contract, other companies learn the cost of resistance. If Anthropic caves and lifts restrictions, they face pressure to do the same.

Someone has to blink. The data shows why neither side wants to be first.
