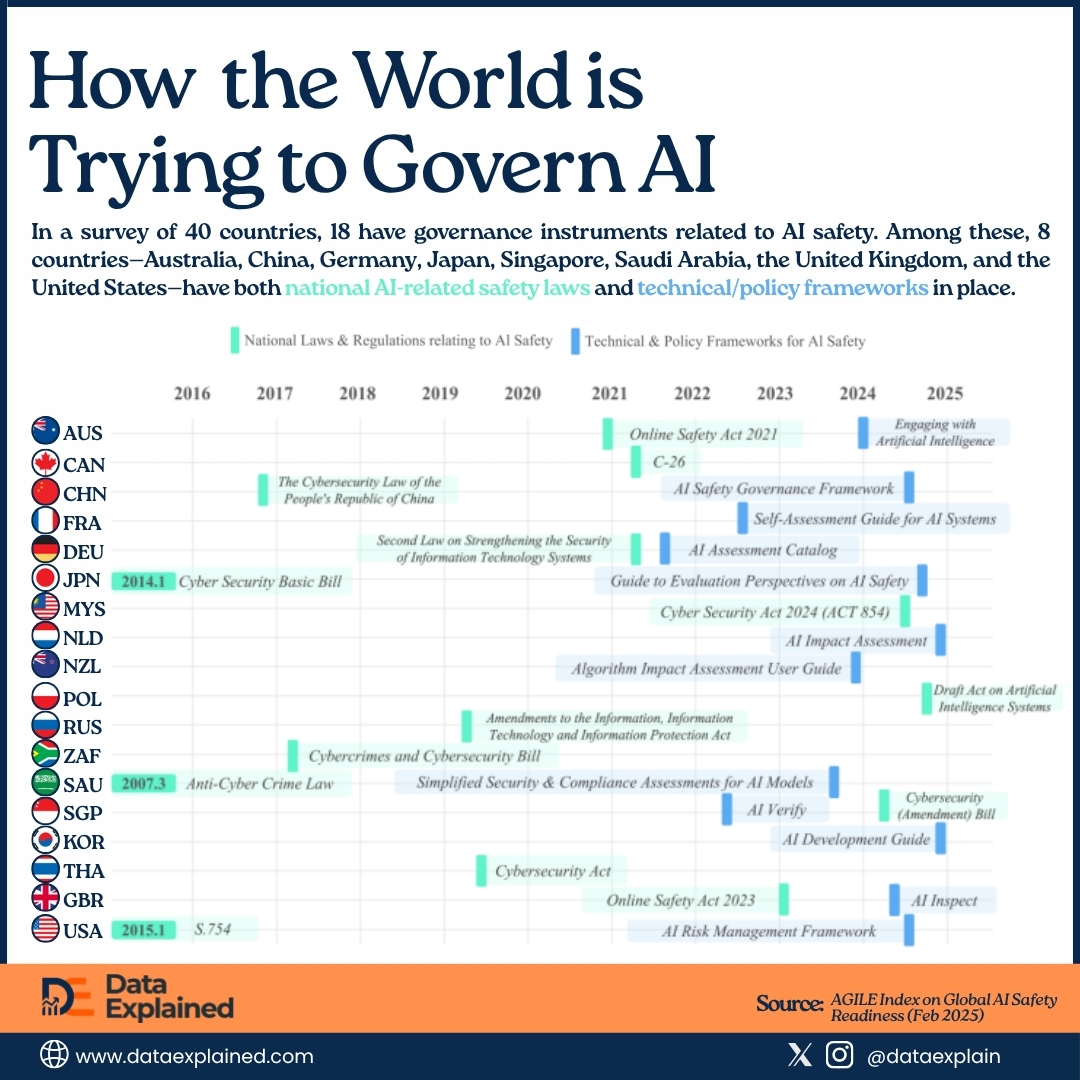

Over the last 10 years, governments around the world have been attempting to manage Artificial Intelligence (AI) as potentially risky infrastructure.

Today’s visualization shows how laws, policies, and tools related to AI safety are being implemented, but only in some countries.

It was drawn from the Global Index for AI Safety, published by the AGILE Index in February 2025.

The data maps two types of governance instruments across 18 countries:

- Binding national laws and regulations (green)

- Voluntary technical and policy frameworks (blue)

TL;DR

- Voluntary frameworks have substantially outnumbered national laws on AI over the last 10 years across 18 key economies.

- China’s Cybersecurity Law of 2017 is among the earliest entries in the data, predating any equivalent Western legislation.

In other words, most of what the world calls AI safety governance is a set of guidance documents. These are frameworks that companies deploying AI systems are invited, but not legally required, to follow.

The table below mirrors the data set of our infographic: the number of AI-related binding national laws and voluntary technical frameworks for each country in the 10-year period.

| Country | AI-related National Laws | AI-related Voluntary Frameworks |
|---|---|---|
| Australia | 1 | 1 |
| Canada | 1 | 0 |
| China | 1 | 1 |
| France | 0 | 1 |
| Germany | 1 | 1 |
| Japan | 1 | 1 |
| Malaysia | 1 | 0 |
| Netherlands | 0 | 1 |
| New Zealand | 0 | 1 |
| Poland | 1 | 0 |

The United States: Voluntary and Waiting

The United States has no comprehensive federal AI safety law.

The AGILE Index shows two U.S. entries: S.754, a cybersecurity bill from 2015, and an AI Risk Management Framework from approximately 2023 to 2024, published by the National Institute of Standards and Technology.

Neither is an AI-specific statute, and neither is binding on private companies.

Among the companies these instruments do not bind are OpenAI, which reached approximately $24 billion in annualized revenue in early 2026 according to Epoch AI data, and Anthropic, which is approaching $19 billion.

Their AI systems are embedded in healthcare diagnostics, legal research, financial services, and educational tools across the United States and globally.

The Trump administration has since moved to roll back AI oversight measures introduced under the Biden administration’s AI executive order, further narrowing the federal governance footprint.

China’s Early Entry

China’s Cybersecurity Law of 2017 is among the earliest entries in the data.

It predated any equivalent Western legislation.

China has since added instruments not fully captured in this dataset, including algorithmic recommendation rules in 2022 and generative AI regulations in 2023.

The country most frequently cited in Western policy discourse as an AI safety risk has been legislating in this space longer than most Western democracies.

The AGILE Index data does not resolve the question of whether Chinese AI regulation constrains the technology or primarily serves state control objectives.

However, it complicates the narrative that AI governance is a Western priority that China lacks.

The Small Country Signal

- New Zealand published an Algorithm Impact Assessment User Guide around 2020 (before the European Union, the United Kingdom, or the United States had equivalent tools).

- Singapore produced AI Verify around 2023, one of the first government-developed AI testing toolkits in the world, designed to allow companies to test AI systems against a defined set of safety and ethical principles.

- Saudi Arabia carries three entries (an anti-cybercrime law from 2007, simplified compliance assessments for AI models around 2021, and a cybersecurity amendment bill around 2024).

A Gulf state operating a national technology transformation under Vision 2030 now has more visible AI safety governance activity than France, South Korea, or the Netherlands individually, according to the data.

What about the rest of the world?

The data covers 18 countries, drawn from the 40 countries surveyed for the report.

Still, the 18 countries are only a small part of the United Nations’ 195 member and observer states.

The other 177 nations have no visible national AI safety governance instrument of any kind in this dataset. No country from Latin America, South Asia, or the Middle East (beyond Saudi Arabia) appears.

Meanwhile, only one sub-Saharan African country (South Africa) appears, with a cybersecurity bill that predates the AI governance era.

The EU’s Missing Entry

The data’s most significant methodological limitation is what it structurally excludes.

That’s the EU AI Act.

It entered into force in August 2024 and represents the world’s most comprehensive binding AI regulatory framework.

The Act, which covers risk classification, prohibited applications, and conformity assessments, does not appear because it is a supranational instrument rather than a national law.

Its influence still shows up indirectly. Poland’s draft AI act, visible in the data as a 2025 entry, is partly compliance preparation for EU AI Act obligations.

Germany’s and the Netherlands’ entries are also shaped by the Act.

Now What?

The AGILE Index timeline ends in February 2025.

The cluster of blue framework bars in 2024 and 2025 reflects governments publishing guidance in direct response to the rapid deployment of large language models, which by then had already been operating for two years.

These are reactive instruments, produced after the technology they reference was already embedded in systems people depend on.

If anything, the data shows us how the 18 countries are trying to catch up with AI.

In most cases, what came was guidance rather than law, and it came only after the technology had arrived.

Source:

AGILE Index on Global AI Safety Readiness | Epoch AI